UniScientist: Advancing Universal Scientific Research Intelligence

2026-03-04

Advancing Universal Scientific Research Intelligence via Evolving Polymathic Synthesis

The Next Frontier: Teaching Machines to Do Science

Scientific discovery drives civilizational progress, yet its pace is bottlenecked by the finite bandwidth of human cognition and the fragmentation of disciplinary expertise. LLM systems that can genuinely conduct research would represent a decisive step toward real-world automation at the frontier of human intellectual labor.

High-quality scientific data remains a critical bottleneck. Existing data construction paradigms fall into two extremes: purely human-authored datasets offer ecological validity and expert judgment but are expensive, unscalable, and bounded by individual annotators' disciplinary silos; purely synthetic pipelines scale effortlessly but lack the discriminative precision and domain grounding that only human expertise can provide.

We identify a fundamental asymmetry that neither paradigm exploits:

LLMs as Generators

LLMs possess broad, cross-disciplinary knowledge that enables diverse generation at scale — transcending the knowledge boundaries of any single human expert.

Humans as Verifiers

Human experts possess discriminative precision that ensures quality and groundedness. Verification is cognitively far cheaper than creation from scratch — an asymmetry existing pipelines fail to leverage.

Principled Division of Labor

This complementarity suggests a human-LLM collaborative data production paradigm that reassigns each agent to its comparative strength, yielding data of higher quality and broader coverage than either alone.

Applying this paradigm, we produce a large-scale, research-level scientific training corpus spanning 10+ domains, with every problem accompanied by structured rubric-based supervision. We then train UniScientist, an agentic model that leverages this data to acquire genuine research capabilities.

Formalizing Scientific Research: An Agentic Perspective

The first challenge is conceptual: how do you formalize "doing science"?

We model open-ended scientific research as Active Evidence Integration and Model Abduction. For a task q, the system maintains an evolving evidence state consisting of two complementary sets:

Two Sources of Evidence

Evidence-Grounded: Items whose correctness is independently verifiable — obtained from external sources or arising from internally produced results subjected to explicit checks and validation. This is the "standing on the shoulders of giants" part.

Formally-Derivable: Items obtained by reproducible procedures, including symbolic analysis, numerical computation, and simulation-based experimentation. This is the "doing the science yourself" part.

This formulation naturally supports an agentic view of scientific research. Constructing and refining the evidence state requires sequential, goal-directed control over information acquisition and experimentation under resource constraints, with adaptive updates driven by intermediate outcomes.

The overall process can be written as a dynamical system over evidence–hypothesis states: at each iteration, the system (1) acquires new goal-directed evidence and validates it, (2) derives new formally-derivable items through reproducible derivation or experimentation, and (3) abduces — updating the explanatory hypothesis to best account for the current evidence state. The process terminates once the evidence state is sufficiently complete and stable, after which everything is consolidated into a coherent scientific report.
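The three-step iteration can be sketched as a plain Python loop. This is a minimal illustration under stated assumptions, not the authors' implementation: `acquire`, `validate`, `derive`, and `abduce` are hypothetical callables standing in for tool-using agent steps, the final report-writing step is omitted, and the stopping rule (no new validated or derived items and an unchanged hypothesis) is one assumed reading of "sufficiently complete and stable".

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class EvidenceState:
    grounded: List[str] = field(default_factory=list)  # evidence-grounded items
    derived: List[str] = field(default_factory=list)   # formally-derivable items


def research_loop(
    task: str,
    acquire: Callable[[str, EvidenceState, Optional[str]], List[str]],  # hypothetical
    validate: Callable[[str], bool],                                     # hypothetical
    derive: Callable[[str, EvidenceState, Optional[str]], List[str]],   # hypothetical
    abduce: Callable[[str, EvidenceState], str],                         # hypothetical
    max_iters: int = 100,
) -> Tuple[EvidenceState, Optional[str]]:
    state, hypothesis = EvidenceState(), None
    for _ in range(max_iters):
        # (1) Goal-directed acquisition; only items that pass validation are kept.
        new_grounded = [e for e in acquire(task, state, hypothesis) if validate(e)]
        state.grounded.extend(new_grounded)
        # (2) New items from reproducible derivation, computation, or simulation.
        new_derived = derive(task, state, hypothesis)
        state.derived.extend(new_derived)
        # (3) Abduction: revise the hypothesis to account for the current state.
        new_hypothesis = abduce(task, state)
        # Assumed stopping rule: nothing new was added and the hypothesis is unchanged.
        if not new_grounded and not new_derived and new_hypothesis == hypothesis:
            break
        hypothesis = new_hypothesis
    return state, hypothesis
```

Passing the callables in keeps the control flow separate from whatever model or tools implement each step.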

Evolving Polymathic Synthesis: The Data Engine

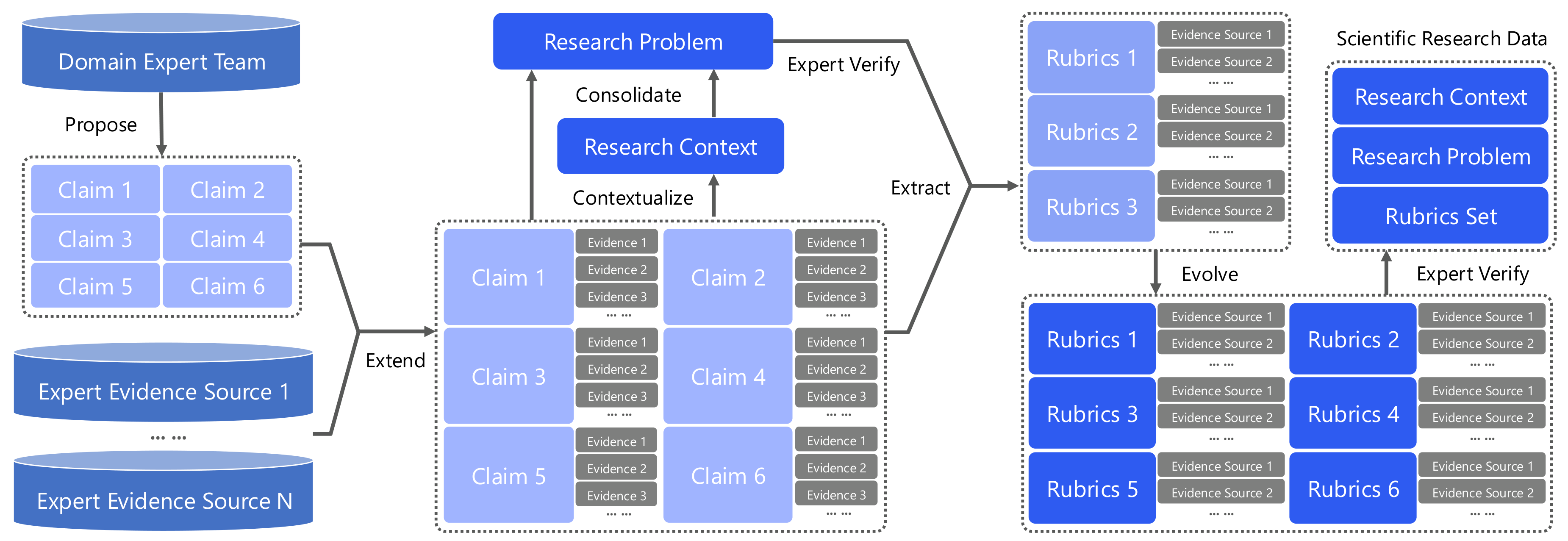

We propose the Evolving Polymathic Synthesis paradigm for synthesizing high-quality scientific research problems and their evaluation rubrics. It comprises two components: (i) extending validated scientific claims into open-ended research problems, and (ii) synthesizing rubrics that assess the completeness of candidate solutions.

From Scientific Claims to Research Problems

We aim to construct high-research-value problems across scientific domains. Each problem is grounded in prior literature and a well-specified research setting, requiring coordinated experimentation and principled derivations over multiple interdependent sub-questions.

Constructing a research problem proceeds in four stages:

Knowledge Expansion

Starting from multiple mutually related and pre-validated scientific claims, a search agent performs iterative retrieval over relevant evidence sources (scientific papers, authoritative websites). This stage continuously enriches the evidence pool associated with the claims.

Research Contextualization

Conditioned on the expanded evidence pool, an LLM constructs a coherent research context — including the research topic and background — to situate the claims within a well-defined scientific setting.

Problem Consolidation

Given the expanded evidence and contextualized setting, an LLM consolidates the relevant knowledge and abstracts it into a single, topic-coherent research problem, structured as multiple interdependent sub-questions whose solutions jointly address the overarching goal.

Refinement & Validation

LLMs refine and validate the problem specification, ensuring that it is well-posed, clearly stated, and substantively valuable. This yields research problems that instantiate the scientific research abilities formalized above.
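The four stages read naturally as a pipeline. The sketch below assumes each stage is exposed as a callable (`retrieve`, `contextualize`, `consolidate`, and `refine_and_validate` are hypothetical names, not an API from this work), models the evidence pool as a set of strings, and stops Knowledge Expansion when a retrieval round adds nothing new or a round cap is hit; both stopping conditions are assumptions.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Set


@dataclass
class ResearchProblem:
    context: str               # research topic and background
    sub_questions: List[str]   # interdependent sub-questions


def build_problem(
    claims: List[str],
    retrieve: Callable[[Set[str]], Set[str]],                 # hypothetical search agent
    contextualize: Callable[[Set[str]], str],                 # hypothetical LLM call
    consolidate: Callable[[Set[str], str], ResearchProblem],  # hypothetical LLM call
    refine_and_validate: Callable[[ResearchProblem], Optional[ResearchProblem]],
    max_rounds: int = 5,
) -> Optional[ResearchProblem]:
    # Stage 1, Knowledge Expansion: iteratively enrich the evidence pool.
    pool: Set[str] = set(claims)
    for _ in range(max_rounds):
        new_evidence = retrieve(pool) - pool
        if not new_evidence:      # assumed stopping rule: a round adds nothing new
            break
        pool |= new_evidence
    # Stage 2, Research Contextualization: situate the claims in a research setting.
    context = contextualize(pool)
    # Stage 3, Problem Consolidation: one topic-coherent problem with sub-questions.
    problem = consolidate(pool, context)
    # Stage 4, Refinement & Validation: None means the candidate is rejected.
    return refine_and_validate(problem)
```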

Evolving Rubrics: Making the Unverifiable Verifiable

After initial feasibility and non-triviality screening, domain experts extract the key knowledge points required for solving the task, initializing the rubrics as a checklist of necessary evidence. A search agent then iteratively expands the rubrics conditioned on the research problem.

Each rubric item is atomic, objective, and either evidence-grounded or formally derivable.

Beyond the commonly accepted requirements for rubric design (grounded in expert guidance, comprehensive coverage, self-contained evaluation), we impose three additional desiderata:

Objective Consistency

For a fixed scientific report, repeated evaluations under the same rubric (performed K times) should yield consistent results, filtering out subjective or unstable criteria.

Discriminability

For reports exhibiting graded levels of completeness, rubric-based scores should meaningfully separate quality levels, filtering out trivial criteria.

Atomicity

Each rubric item should test a single knowledge point, avoiding composite criteria that simultaneously assess multiple claims — ensuring clean and verifiable evaluation units.
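Objective consistency and discriminability lend themselves to automated screens. The sketch below assumes a hypothetical `judge(item, report) -> bool` (for example, an LLM grader) and a list of reports ordered from least to most complete. The agreement threshold, the default K = 5, and the item-level triviality test are illustrative choices. Atomicity is not checked here, since it is a judgment about item content that is left to review.

```python
from collections import Counter
from typing import Callable, List, Sequence

# Hypothetical grader interface: (rubric_item, report) -> satisfied?
Judge = Callable[[str, str], bool]


def is_consistent(item: str, report: str, judge: Judge, k: int = 5,
                  min_agreement: float = 1.0) -> bool:
    """Objective consistency: K repeated judgments of one fixed report should agree."""
    votes = Counter(judge(item, report) for _ in range(k))
    return votes.most_common(1)[0][1] / k >= min_agreement


def is_discriminative(item: str, graded_reports: Sequence[str], judge: Judge) -> bool:
    """Item-level discriminability: a trivial item returns the same verdict at every level."""
    return len({judge(item, report) for report in graded_reports}) > 1


def scores_separate_levels(items: Sequence[str], graded_reports: Sequence[str],
                           judge: Judge) -> bool:
    """Set-level discriminability: uniform-weight scores rise strictly with completeness."""
    scores = [sum(judge(i, rep) for i in items) / len(items) for rep in graded_reports]
    return all(lo < hi for lo, hi in zip(scores, scores[1:]))


def filter_rubric(items: Sequence[str], reference_report: str,
                  graded_reports: Sequence[str], judge: Judge, k: int = 5) -> List[str]:
    """Keep items that are both consistent on a fixed report and non-trivial."""
    return [item for item in items
            if is_consistent(item, reference_report, judge, k)
            and is_discriminative(item, graded_reports, judge)]
```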

The rubrics are evolved and then subjected to joint refinement and verification by multiple LLMs and human experts. We adopt uniform weighting across items — the rubric set functions as a collection of unit tests over the key knowledge claims, turning an otherwise non-verifiable open-ended research task into an approximately verifiable one.
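Under uniform weighting, one natural way to write the score (our notation, not necessarily the authors') is as the fraction of the \(M\) rubric items that a report \(r\) satisfies:

\[
S(r) = \frac{1}{M} \sum_{m=1}^{M} \mathbb{1}\bigl[\, r \text{ satisfies rubric item } m \,\bigr], \qquad S(r) \in [0, 1].
\]

Each item then behaves like one pass/fail unit test and contributes equally to the total.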

Learning to Integrate Collective Research Intelligence

Beyond standard agentic supervised fine-tuning, we introduce an additional learning objective to fully leverage collective research intelligence.

Report Aggregation Objective

Given a task q and N candidate research reports produced by different agents, the model learns to generate a consolidated report that integrates the best elements of all candidates. The training reference is obtained via rubric-based rejection sampling: a sampled consolidated report is accepted only if its rubric score exceeds a predefined threshold.
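Rubric-based rejection sampling for the aggregation reference might look like the sketch below. `aggregate` and `satisfied` are hypothetical stand-ins for the aggregating model and the rubric judge; the uniform-weight score, the 0.8 threshold, and the retry budget are assumptions, since the text only says the threshold is predefined.

```python
from typing import Callable, List, Optional


def rubric_score(report: str, items: List[str], satisfied: Callable[[str, str], bool]) -> float:
    """Uniform weighting: fraction of rubric items the report satisfies."""
    return sum(satisfied(item, report) for item in items) / len(items)


def sample_aggregation_reference(
    task: str,
    candidates: List[str],
    aggregate: Callable[[str, List[str]], str],   # hypothetical: samples one consolidated report
    items: List[str],
    satisfied: Callable[[str, str], bool],        # hypothetical rubric judge
    threshold: float = 0.8,                       # assumed value for the predefined threshold
    max_tries: int = 8,                           # assumed sampling budget
) -> Optional[str]:
    """Rejection sampling: return the first consolidated report scoring above threshold."""
    for _ in range(max_tries):
        merged = aggregate(task, candidates)
        if rubric_score(merged, items, satisfied) > threshold:
            return merged
    return None   # in this sketch, no accepted reference means the example is skipped
```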

This capability strengthens the agent's ability to assess the completeness and quality of research outputs, to reconsider competing perspectives, and to reorganize evidence and arguments — enabling the research quality to self-evolve over time.

This is central to scientific research practice: researchers routinely synthesize insights from multiple sources, evaluate conflicting findings, and consolidate the best evidence into a coherent narrative.

The Universal Scientific Research Dataset

Applying the Evolving Polymathic Synthesis paradigm, we construct a large-scale, research-level scientific training corpus:

First-level domain distribution

The paradigm covers the full breadth of scientific domains, spanning topics from quantum physics and organic chemistry to sociocultural anthropology and computational linguistics, with further coverage including immunology, geophysics, materials science, and many others.

Experiments

We fine-tuned Qwen3-30B-A3B-Thinking-2507 on an NVIDIA H200 GPU cluster for approximately 1,200 GPU-hours, yielding UniScientist. We set the maximum context length to 128k tokens and allowed up to 100 tool-invocation steps per task. The enabled tool suite comprises web_search, google_scholar, page_fetching, and code_interpreter.

Benchmarks

We evaluate UniScientist on five representative benchmarks:

- FrontierScience-Research [1] — closest in task form to our data (in-domain); however, our data targets broader coverage while FrontierScience-Research is largely confined to physics, chemistry, and biology

- FrontierScience-Olympiad [1] — scientific knowledge QA rather than research-style problem solving (out-of-domain)

- DeepResearch Bench [2] / DeepResearch Bench II [3] / ResearchRubrics [4] — general-purpose research and information integration capability assessment (out-of-domain)

Main Results

- UniScientist achieves top-tier performance across all five benchmarks. Even on out-of-domain benchmarks, it shows substantial gains over the base model, demonstrating that the benefits of our Evolving Polymathic Synthesis data generalize broadly.

- A 30B-parameter mixture-of-experts model with about 3B active parameters (UniScientist-30B-A3B) achieves 28.3 on FrontierScience-Research, surpassing Claude Opus 4.5 (17.5), Gemini 3 Pro (12.4), and GPT-5.2 completion mode (25.2). With test-time scaling, it reaches 33.3.

- The improvement is not limited to stronger tool use: under tool-free evaluation, UniScientist still delivers clear gains over its base model, indicating enhanced intrinsic scientific research ability.

- On the out-of-domain benchmarks (DeepResearch Bench, DeepResearch Bench II, and ResearchRubrics), UniScientist performs on par with a range of top-tier closed-source models.

Inference Case Study

This section walks through one full inference case, showing the agent's tool-interaction trajectory round by round.

Rubric items: 10 · Rubric points: 10.0 · Final answer length: 17,031 characters

Per-round tool interaction trajectory (22 rounds)

Round 01 · search

{
  "arguments": "{\"query\": [\"meso-nitrogen atoms phthalocyanine\", \"modification of meso-nitrogen atoms nickel phthalocyanine\", \"phthalocyanine meso position\"]}",
  "name": "search"
}

Round 02 · visit

{
  "arguments": "{\"url\": [\"https://www.nature.com/articles/s41467-025-58419-9\"], \"goal\": \"Get details on synthetic methodology for meso-position editing of phthalocyanine derivatives, focusing on modification of meso-nitrogen atoms in nickel(II) phthalocyanine.\"}",
  "name": "visit"
}

Round 03 · google_scholar

{
  "arguments": "{\"query\": [\"meso nitrogen phthalocyanine electronic structure\", \"tetrabenzodiazanorcorrole\", \"phthalocyanine meso position modification\"]}",
  "name": "google_scholar"
}

Round 04 · visit

{
  "arguments": "{\"url\": [\"https://pubs.acs.org/doi/abs/10.1021/acs.inorgchem.5b00380\"], \"goal\": \"Get details on electrochemical and spectroscopic implications of meso CH group replacement with nitrogen in phthalocyanine derivatives.\"}",
  "name": "visit"
}

Round 05 · search

{
  "arguments": "{\"query\": [\"meso nitrogen replacement phthalocyanine\",\"aza-substitution meso phthalocyanine\",\"meso position modification phthalocyanine\"]}",
  "name": "search"
}

Round 06 · visit

{
  "arguments": "{\"url\": [\"https://repositorio.imdeananociencia.org/bitstreams/545ddabb-87b0-4571-a674-5344e499815a/download\"], \"goal\": \"Get information about aromaticity signatures in UV-Vis absorption spectra of phthalocyanines with meso substitution.\"}",
  "name": "visit"
}

Round 07 · search

{
  "arguments": "{\"query\": [\"s41467-025-58419-9 supplementary\",\"tetrabenzodiazanorcorrole electronic structure\",\"meso-edited phthalocyanine derivatives electronic structure\"]}",
  "name": "search"
}

Round 08 · search

{
  "arguments": "{\"query\": [\"s41467-025-58419-9 pdf\",\"tetrabenzodiazanorcorrole 2 structure\"]}",
  "name": "search"
}

Round 09 · google_scholar

{
  "arguments": "{\"query\": [\"tetrabenzodiazanorcorrole 16 pi electron\",\"tetrabenzotriazacorrole 17 pi electron\",\"meso-edited phthalocyanine electronic structure\"]}",
  "name": "google_scholar"
}

Round 10 · visit

{
  "arguments": "{\"url\": [\"https://www.sciencedirect.com/science/article/pii/S0010854514002902\"], \"goal\": \"Get details on tetrabenzotriazacorrole (TBC) synthesis, electronic structure, aromaticity, spectroscopic properties, and reactivity.\"}",
  "name": "visit"
}

Round 11 · search

{
  "arguments": "{\"query\": [\"tetrabenzotriazacorrole synthesis\",\"tetrabenzodiazanorcorrole synthesis\"]}",
  "name": "search"
}

Round 12 · search

{
  "arguments": "{\"query\": [\"phthalocyanine 18 pi electron aromatic\",\"phthalocyanine antiaromatic 16 pi\",\"norcorrole 16 pi electron\"]}",
  "name": "search"
}

Round 13 · visit

{
  "arguments": "{\"url\": [\"https://www.sciencedirect.com/science/article/pii/S2041652024006448\"], \"goal\": \"Get details on antiaromatic norcorroles, 16 π-electron systems, and their properties.\"}",
  "name": "visit"
}

Round 14 · search

{
  "arguments": "{\"query\": [\"norcorrole 16 pi electron arXiv\",\"tetrabenzodiazanorcorrole arXiv\"]}",
  "name": "search"
}

Round 15 · visit

{
  "arguments": "{\"url\": [\"https://www.beilstein-journals.org/bjoc/articles/20/172\"], \"goal\": \"Get details on antiaromatic Ni(II) norcorroles, their electronic structure, aromaticity, spectroscopic properties, and reactivity.\"}",
  "name": "visit"
}

Round 16 · google_scholar

{
  "arguments": "{\"query\": [\"tetrabenzotriazacorrole 17 pi electron\",\"17 pi electron corrole radical\"]}",
  "name": "google_scholar"
}

Round 17 · visit

{
  "arguments": "{\"url\": [\"https://www.worldscientific.com/doi/abs/10.1142/S1088424623300057\"], \"goal\": \"Get details on corrole radicals, electronic structure, aromaticity, spectroscopic properties, and reactivity.\"}",
  "name": "visit"
}

Round 18 · search

{
  "arguments": "{\"query\": [\"tetrabenzotriazacorrole DFT\",\"tetrabenzodiazacorrole electronic structure\"]}",
  "name": "search"
}

Round 19 · visit

{
  "arguments": "{\"url\": [\"https://pmc.ncbi.nlm.nih.gov/articles/PMC11954904/\"], \"goal\": \"Get full text of the Nature Communications article on nickel(II)-containing meso-edited phthalocyanine derivatives, including details on electronic structure, aromaticity, spectroscopic properties, reactivity, and synthetic methodology.\"}",
  "name": "visit"
}

Round 20 · visit

{
  "arguments": "{\"url\": [\"https://en.wikipedia.org/wiki/Phthalocyanine\"], \"goal\": \"Get details on phthalocyanine electronic structure, aromaticity, spectroscopic properties.\"}",
  "name": "visit"
}

Round 21 · search

{
  "arguments": "{\"query\": [\"corrole aromaticity 18 pi\",\"norcorrole antiaromatic 16 pi\"]}",
  "name": "search"
}

Round 22 · visit

{
  "arguments": "{\"url\": [\"https://pmc.ncbi.nlm.nih.gov/articles/PMC11110129/\"], \"goal\": \"Get details on norcorrole antiaromaticity, 16 π-electron system, magnetic criteria.\"}",
  "name": "visit"
}
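Each raw payload is a JSON object whose `arguments` field is itself a JSON-encoded string, so reading a call takes two decoding passes. A minimal Python sketch, using Round 11's payload verbatim and tallying the tool names transcribed from the 22 round headers (the helper name `parse_tool_call` is ours, not part of the agent's tooling):

```python
import json
from collections import Counter


def parse_tool_call(raw: str):
    """Decode one raw payload: the outer object is JSON, and its `arguments`
    field is itself a JSON-encoded string, so it needs a second json.loads."""
    call = json.loads(raw)
    return call["name"], json.loads(call["arguments"])


# Round 11's payload, copied verbatim (the raw string keeps the backslash escapes).
raw_round_11 = r'''{
  "arguments": "{\"query\": [\"tetrabenzotriazacorrole synthesis\",\"tetrabenzodiazanorcorrole synthesis\"]}",
  "name": "search"
}'''
name, args = parse_tool_call(raw_round_11)
print(name, args["query"])

# Tool names of the 22 rounds, transcribed from the round headers.
rounds = ["search", "visit", "google_scholar", "visit", "search", "visit", "search",
          "search", "google_scholar", "visit", "search", "search", "visit", "search",
          "visit", "google_scholar", "visit", "search", "visit", "visit", "search", "visit"]
print(Counter(rounds))
```

Applied to all 22 rounds, the tally is 10 `visit`, 9 `search`, and 3 `google_scholar` calls.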


Discussions

Code Interpreter Matters

We equip UniScientist with code_interpreter as a first-class tool that enables executable and reproducible computation. While a model may approximate "mental simulations" through internal deliberation, such purely textual reasoning is neither efficient nor reliably calibrated for many scientific domains.

From an agentic perspective, code_interpreter turns the research loop from narrative reasoning into an iterative test–revise procedure: hypotheses are not only proposed but also instantiated as computations whose outcomes can confirm, refute, or sharpen competing explanations. This is essential for scientific research agents, where progress often hinges on running targeted analyses and simulations to validate ideas under explicit constraints.

From Synthesized Data to Scientific Research Ability

Our constructed research instances exhibit a mixed structure rather than decomposing into fully independent queries. Later steps often build on earlier results, intermediate evidence, or clarified assumptions, while certain components can be advanced concurrently. The problem specification is intentionally partially instantiated: it fixes a concrete setting but does not close the information loop.

Completing each task therefore requires targeted acquisition and validation of additional facts, alongside generating new, formally justified evidence — these intermediate outcomes, in turn, shape what to seek or test next. In this way, each instance instantiates the agentic evidence–hypothesis loop. The rubrics translate this process into supervision by specifying checkable competency items aligned with the key steps of research.

Beyond the Knowledge Limits of a Single Expert

Domain experts typically offer substantial depth within a narrow specialty, but cannot maintain comparable coverage across many disciplines. Our Evolving Polymathic Synthesis paradigm reconfigures this division of labor: LLM agents synthesize candidate research problems by leveraging broad cross-disciplinary coverage and scalable information acquisition, while human experts act primarily as high-precision reviewers who adjudicate solvability, non-triviality, and research value.

This shift substantially improves the throughput and controllability of the synthesis pipeline, supporting continuous construction of high-value research problems across the full scientific landscape.

Case Studies

Full Case Prompt

Predicting stereochemical outcomes in thermal electrocyclic cascades is often harder than applying the Woodward–Hoffmann rules (e.g., 8π conrotatory, 6π disrotatory) because multiple conformers, symmetry-degenerate rotation modes, and torquoselective effects can make “allowedness” insufficient to determine product ratios. A common unresolved case involves thermolysis of a geometrically defined linear tetraene—(Z,Z,Z,E)-deca-2,4,6,8-tetraene—reported to undergo an 8π/6π electrocyclization cascade in which the first ring closure gives a single stereoisomeric intermediate, yet the second ring closure furnishes two distinct final stereoisomers with a non-intuitive 3:1 ratio rather than 1:1. Resolving whether such a ratio is intrinsic (symmetry/statistics/kinetics) or artifactual requires connecting orbital-symmetry reasoning to quantitative kinetics and experimentally observable intermediates/products.

Use analytically pure (≥98%) (Z,Z,Z,E)-deca-2,4,6,8-tetraene (track “terminal methyl groups” at C1/C10 as stereochemical markers). Run thermal reactions at 0.050 M in toluene-d8 in sealed tubes under N2 at 120.0 ± 0.5 °C. Collect timepoints at t = 0, 5, 15, 30, 60, 120 min, and 24 h (n = 3 independent replicates). Quantify products by GC-FID with an internal standard (e.g., n-dodecane) and confirm by GC–MS; target method precision RSD ≤ 5% across replicates. Assign stereochemistry by 1H/13C NMR plus at least one 2D experiment (NOESY/ROESY); if enantiomeric outcomes are possible, include chiral GC/HPLC or derivatization. Interrogate the proposed “single stereoisomeric intermediate” via in situ/time-resolved NMR and/or rapid thermal quench experiments. For mechanistic adjudication, compute all symmetry-unique 8π and 6π transition structures (including conrotatory/disrotatory possibilities as relevant) at M06-2X/def2-TZVP with SMD(toluene), verify by IRC, and report ΔG‡ at 393 K. The key deliverable is a mechanistically justified explanation (or falsification) of the observed A:B ratio that is consistent with torquoselectivity, symmetry-degenerate pathways, and Curtin–Hammett/kinetic control.

- Pathway enumeration and stereochemical mapping: Enumerate all symmetry-distinct electrocyclization pathways (including symmetry-equivalent rotation modes and conformationally distinct but rapidly interconverting precursors) from the tetraene to the two final stereoisomers. For each, map rotation sense to product configuration using an explicit orbital-correlation/FMO argument, and state whether any “distinct pathways” converge to the same observable product (including enantiomeric vs diastereomeric outcomes that change pathway counting but not GC/NMR integrals).

- Ratio models tied to observables: Construct (a) a statistical degeneracy model (equal rate constants for symmetry-distinct pathways) and (b) a kinetic model parameterized by computed ΔG‡ values (Curtin–Hammett where appropriate). For each model, specify assumptions, derive the predicted A:B ratio from pathway weights (including how intermediate formation/consumption affects the integrated product ratio), and identify which assumptions are necessary to obtain 3:1 rather than 1:1.

- Experimental discrimination and controls: Using the sampling/analytics above, propose an analysis plan that (i) tests whether the intermediate is truly a single stereoisomer (or a rapidly equilibrating set), (ii) estimates time-dependent concentrations and A:B with uncertainty (replicate error propagation), and (iii) distinguishes the two ratio models above. Include at least one control that probes the origin of selectivity (e.g., temperature variation to separate kinetic vs thermodynamic control, and/or photochemical activation to invert conrotatory/disrotatory expectations) and define objective decision criteria (goodness-of-fit or model selection) for accepting/rejecting each mechanistic picture.

- Robustness/sensitivity: Specify how conclusions about mechanism and A:B depend on (i) conformer population assumptions (pre-equilibrium vs non-equilibrium), (ii) plausible DFT variability (at least one alternative functional/basis check), and (iii) analytical quantification uncertainty (GC response factors, integration, and RSD), and state what outcomes would constitute a robust falsification of an intrinsic 3:1 selectivity.

Full Rubrics (31 items)

- Include the Woodward-Hoffmann/FMO argument for thermal allowedness of 8pi conrotatory and 6pi disrotatory electrocyclizations, with explicit reference to HOMO nodal patterns. wiley.com

- Include explicit atom-tracking of terminal methyl groups (C1 and C10) through both electrocyclization steps, including which carbons form the new sigma bond in the 8pi step and resulting cis/trans relationships. oregonstate.edu

- Enumerate all symmetry-distinct 8pi conrotatory rotation modes (e.g., clockwise vs counterclockwise) and state whether they are symmetry-equivalent, enantiomer-forming, or distinct under achiral conditions. wiley.com

- Consider conformationally distinct tetraene precursors (rotamers) that can undergo 8pi closure and discuss how rapid interconversion at 120 degC affects pathway counting (Curtin-Hammett scenario). oregonstate.edu

- Map each conrotatory rotation sense in the 8pi step to intermediate configuration using orbital-phase correlation and conclude whether the two rotations yield the same stereoisomer or enantiomers. wiley.com

- Enumerate all symmetry-distinct 6pi disrotatory modes from the 8pi intermediate and map each disrotation sense to final product A or B, including terminal methyl relative configuration. wiley.com

- State whether the 8pi intermediate is a single stereoisomer, a racemic pair, or a rapidly equilibrating set, and connect this to distinct 6pi pathways. oregonstate.edu

- Identify which distinct pathways lead to the same observable product (enantiomers vs diastereomers) and implications for achiral GC-FID/NMR integrals. oregonstate.edu

- Include an explicit kinetic scheme for the cascade (S -> I via 8pi, I -> A and I -> B) with reversibility assumptions and branching point. oregonstate.edu

- Derive product ratio A:B = gA:gB from statistical degeneracy with equal pathway rate constants. wiley.com

- List assumptions of statistical degeneracy model (equal barriers, no torquoselectivity, no conformer bias, no post-formation interconversion, identical detectability) and which must be violated for 3:1 instead of 1:1. wiley.com

- Calculate the activation free-energy difference corresponding to 3:1 at 393 K (ΔΔG‡ ≈ 0.86 kcal/mol). academia.edu

- Provide Curtin-Hammett expression for A:B with fast pre-equilibrium conformers/intermediates and discuss when conformer populations cancel. oregonstate.edu

- Derive or state time-integrated A:B for consecutive reaction with single intermediate and irreversible branching, and discuss effects of reversibility/multiple intermediates. oregonstate.edu

- Include experimental plan under specified conditions: 0.050 M in toluene-d8, sealed tubes under N2, 120.0 +/- 0.5 degC, timepoints 0/5/15/30/60/120 min and 24 h, n=3 replicates. oregonstate.edu

- Include GC-FID quantification with internal standard and GC-MS confirmation, ensuring RSD <= 5% across replicates. oregonstate.edu

- Propose strategy (in situ/time-resolved NMR or rapid thermal quench) to detect and quantify intermediate, specifying monitored signals and concentration determination. oregonstate.edu

- Provide objective criteria for deciding single stereoisomeric intermediate vs multiple species (NMR signal sets, GC peaks, exchange broadening, etc.). oregonstate.edu

- Include stereochemical assignment strategy using 1H/13C NMR and at least one 2D experiment (NOESY/ROESY), correlating NOE/ROE to terminal methyl orientations. oregonstate.edu

- Include plan for enantiomeric outcomes (chiral GC/HPLC or derivatization) and explain how enantiomers vs diastereomers affect achiral integrals. oregonstate.edu

- Describe fitting concentration-time data to statistical and DFT-parameterized models and estimating parameter uncertainties. oregonstate.edu

- Define objective model-selection criteria (goodness-of-fit, AIC/BIC, CI for ratios) for accepting/rejecting mechanistic pictures.

- Propose at least one control experiment (temperature variation and/or photochemical activation) and predicted diagnostic outcomes for competing mechanisms. oregonstate.edu

- Perform sensitivity analysis on conformer population assumptions (fast pre-equilibrium vs non-equilibrated conformers) and impact on A:B. oregonstate.edu

- Propose plan to estimate conformer populations/interconversion rates at 120 degC (Boltzmann populations, VT-NMR, or kinetic analysis). oregonstate.edu

- Quantify A:B sensitivity to ΔΔG‡ uncertainty using A/B = exp(−ΔΔG‡/RT) at 393 K (with ΔΔG‡ = ΔG‡(A) − ΔG‡(B)), with a numerical example for ±0.5 to 1.0 kcal/mol. academia.edu

- Discuss sensitivity to pathway-enumeration assumptions (symmetry, enantiomers, conformers) and observations that resolve degeneracy. oregonstate.edu

- Address analytical quantification uncertainty (GC response factors, integration, co-elution, internal standard, and RSD <= 5%) and impact on A:B uncertainty. oregonstate.edu

- List robustness criteria for intrinsic 3:1 selectivity (e.g., CI excluding 1:1, agreement between methods, time-invariance).

- Provide falsification criteria for intrinsic 3:1, including at least two specific outcomes (e.g., response-factor correction yields 1:1, time drift, intermediate multiplicity, chiral splitting). oregonstate.edu

- Discuss how temperature dependence of A:B distinguishes statistical degeneracy (constant) from kinetic control (varies with 1/T). academia.edu
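Two of the quantitative rubric items can be checked with a few lines of arithmetic. The sketch below assumes first-order, irreversible steps S → I → {A, B} with a single intermediate, and takes ΔΔG‡ = ΔG‡(A) − ΔG‡(B), so that A/B = exp(−ΔΔG‡/RT). It uses T = 393.15 K for 120.0 °C (the rubric rounds to 393 K). The rate constants in the Euler integration are hypothetical; the integration only illustrates why the time-integrated A:B equals kA/kB under these assumptions.

```python
import math

R = 1.987204e-3   # gas constant, kcal mol^-1 K^-1
T = 393.15        # 120.0 °C in kelvin


def ratio_from_ddg(ddg: float, temp: float = T) -> float:
    """A/B = exp(-ΔΔG‡/RT), with ΔΔG‡ = ΔG‡(A) - ΔG‡(B) in kcal/mol."""
    return math.exp(-ddg / (R * temp))


def ddg_from_ratio(ratio: float, temp: float = T) -> float:
    """Inverse of ratio_from_ddg: ΔΔG‡ = -RT ln(A/B)."""
    return -R * temp * math.log(ratio)


def integrated_ratio(k1: float, kA: float, kB: float,
                     t_end: float = 60.0, dt: float = 1e-3) -> float:
    """Explicit-Euler integration of S -> I (k1), I -> A (kA), I -> B (kB), all irreversible."""
    S, I, A, B = 1.0, 0.0, 0.0, 0.0
    for _ in range(int(t_end / dt)):
        flux_S, flux_A, flux_B = k1 * S * dt, kA * I * dt, kB * I * dt
        S, I = S - flux_S, I + flux_S - flux_A - flux_B
        A, B = A + flux_A, B + flux_B
    return A / B


ddg_3to1 = ddg_from_ratio(3.0)   # negative: the barrier to A is about 0.86 kcal/mol lower
for shift in (-1.0, -0.5, 0.5, 1.0):
    ddg = ddg_3to1 + shift
    print(f"ΔΔG‡ = {ddg:+.2f} kcal/mol -> A:B = {ratio_from_ddg(ddg):.2f}")
print(integrated_ratio(k1=0.05, kA=0.3, kB=0.1))   # A:B tracks kA/kB at every time
```

Because A and B accumulate from the same intermediate pool, A:B under these assumptions depends only on kA/kB and not on how fast I forms or decays; reversibility or multiple intermediates would break this.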

Full Case Prompt

Insect‑pollinated plants can decline when positive feedbacks from pollinating mutualists are insufficient to offset negative effects from nectar thieves and herbivores. In mathematical ecology, coupled ordinary differential equation (ODE) models are commonly used to (i) determine whether a plant–insect community admits a feasible, locally stable coexistence equilibrium and (ii) quantify “persistence thresholds” (minimum mutualist abundance needed to avoid plant collapse) under antagonistic pressure—information directly relevant to restoration choices such as augmenting pollinators versus suppressing herbivores.

Consider a deterministic, well‑mixed community with one focal plant \(P(t)\), three adult mutualist pollinators \(x_1(t),x_2(t),x_3(t)\), and three adult antagonists \(y_1(t),y_2(t),y_3(t)\) (interpretable as two nectar thieves plus one herbivore). A seventh insect species is assumed biogeographically absent and is excluded by setting its state identically to zero. Plant dynamics follow logistic growth with additive insect effects: \[ \frac{dP}{dt}= rP\Bigl(1-\frac{P}{K}\Bigr) + \alpha_1 x_1+\alpha_2 x_2+\alpha_3 x_3+\delta_1 y_1+\delta_2 y_2+\delta_3 y_3, \] with \(r=0.8,\;K=500\), \((\alpha_1,\alpha_2,\alpha_3)=(0.3,0.25,0.35)\) and \((\delta_1,\delta_2,\delta_3)=(-0.4,-0.3,-0.5)\). Mutualist dynamics use a Lotka–Volterra–style self‑limitation plus plant‑mediated benefit: \[ \frac{dx_i}{dt}=c_i x_i\Bigl(1-\sum_{k=1}^3 a_{ik}x_k\Bigr)+m_i P x_i,\quad i\in\{1,2,3\}, \] with purely intraspecific density dependence \(a_{ik}=\mathbf{1}[i=k]\) (so \(\sum_k a_{ik}x_k=x_i\)), \((c_1,c_2,c_3)=(0.4,0.35,0.45)\), and \((m_1,m_2,m_3)=(0.05,0.04,0.06)\). Antagonist dynamics are \[ \frac{dy_j}{dt}= d_j y_j - p_j y_j^2 - n_j P y_j,\quad j\in\{1,2,3\}, \] with \((d_1,d_2,d_3)=(0.3,0.25,0.35)\), \((p_1,p_2,p_3)=(0.02,0.015,0.025)\), and \((n_1,n_2,n_3)=(0.03,0.025,0.035)\). Baseline initial conditions at \(t=0\) are \(P=300\), \((x_1,x_2,x_3)=(40,35,45)\), \((y_1,y_2,y_3)=(50,55,60)\). Numerical experiments should integrate on \(t\in[0,100]\) (e.g., Python solve_ivp on a fixed output grid with stated tolerances), and assess plant persistence over a terminal window (e.g., \(\min_{t\in[80,100]}P(t)\) relative to an explicit extinction threshold) alongside local stability via Jacobian eigenvalues at candidate equilibria. Controls/ablations may include removing herbivory (e.g., setting \(y_3\equiv 0\) or \(\delta_3=0\)) to isolate antagonistic pressure.

- Operational definitions for reproducible evaluation. Restate the model in a fixed state vector order \((P,x_1,x_2,x_3,y_1,y_2,y_3)\). Specify precise mathematical criteria for (a) feasibility/positivity (including how you treat numerical negativity), (b) plant persistence in finite‑horizon simulations (including an explicit extinction threshold and time window), and (c) local stability at equilibrium (Jacobian eigenvalues). Briefly justify why these criteria are appropriate for threshold and stability claims in well‑mixed ODE community models.

- Equilibria and instability mechanism (community matrix reasoning). Enumerate the extinction and boundary equilibria that are structurally implied by the functional forms, and characterize conditions under which a positive coexistence equilibrium can exist. Derive the Jacobian (community matrix) structure at a generic equilibrium and identify which blocks/couplings can create positive feedback leading to instability. Connect the identified mechanism to at least one established result from the Lotka–Volterra mutualism stability literature (e.g., classic stability conditions or known routes to bistability/instability) and explain how the present model’s self‑limitation and cross‑coupling terms fit that framework.

- Persistence threshold as a bifurcation‑like phenomenon. Design a reproducible protocol to estimate a critical mutualist augmentation threshold \(s^*\), where \((x_1(0),x_2(0),x_3(0))=s\cdot(40,35,45)\) and antagonist initial conditions remain fixed. Your protocol should include: (i) a bracketing/bisection (or equivalent) strategy linked directly to your persistence definition; (ii) at least one antagonism‑isolating control (e.g., remove herbivory via \(\delta_3=0\) or \(y_3\equiv 0\)); and (iii) a diagnostic to distinguish a transcritical transition from a saddle‑node (bistability) scenario (e.g., dependence on initial conditions, equilibrium continuation, or changes in Jacobian eigenvalues).

- Intervention choice and robustness. Compare a small, ecologically interpretable set of single‑lever interventions restricted to parameters already in the model—e.g., reducing plant damage from the herbivore (\(\delta_3\) or the herbivore’s \(d_3,p_3,n_3\)) versus increasing mutualist benefit (\(\alpha_i\) and/or \(m_i\)). Select the intervention that most robustly yields plant persistence and local stability, and specify how you will demonstrate robustness (at minimum: a ±10% global parameter perturbation sensitivity analysis and an initial‑condition perturbation test, plus a numerical‑method check such as solver/tolerance comparison).
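For the equilibrium and stability items, set dx_i/dt = 0 and dy_j/dt = 0 on the positive branches. This gives x_i* = 1 + (m_i/c_i)P* and y_j* = (d_j - n_j P*)/p_j, so each equilibrium with a given insect support reduces to a scalar root in P*.

The minimal sketch below assumes SciPy's brentq for that root and placeholder tolerances TAU (residual) and EIG_MARGIN (eigenvalue gap). The bracketing intervals follow from the feasibility condition d_j > n_j P* and from the sign change of the plant equation above K.

```python
import numpy as np
from scipy.optimize import brentq

R, K = 0.8, 500.0
ALPHA = np.array([0.3, 0.25, 0.35])
DELTA = np.array([-0.4, -0.3, -0.5])
C = np.array([0.4, 0.35, 0.45])
M = np.array([0.05, 0.04, 0.06])
D = np.array([0.3, 0.25, 0.35])
PJ = np.array([0.02, 0.015, 0.025])  # p_j
N = np.array([0.03, 0.025, 0.035])
TAU, EIG_MARGIN = 1e-8, 1e-6  # hypothetical residual and eigenvalue tolerances


def F(z):
    """Vector field in the fixed order (P, x1, x2, x3, y1, y2, y3)."""
    P, x, y = z[0], z[1:4], z[4:7]
    return np.concatenate(([R * P * (1 - P / K) + ALPHA @ x + DELTA @ y],
                           C * x * (1 - x) + M * P * x,
                           D * y - PJ * y**2 - N * P * y))


def insects_at(P, antagonists=True):
    """Positive branches x_i* = 1 + (m_i/c_i)P and y_j* = (d_j - n_j P)/p_j (or y = 0)."""
    x = 1.0 + M * P / C
    y = (D - N * P) / PJ if antagonists else np.zeros(3)
    return x, y


def plant_balance(P, antagonists=True):
    """Plant equation after substituting the insect branches; its roots give P*."""
    x, y = insects_at(P, antagonists)
    return R * P * (1 - P / K) + ALPHA @ x + DELTA @ y


def jacobian(z):
    """Community matrix: every insect couples to the others only through the plant."""
    P, x, y = z[0], z[1:4], z[4:7]
    J = np.zeros((7, 7))
    J[0, 0] = R * (1 - 2 * P / K)
    J[0, 1:4], J[0, 4:7] = ALPHA, DELTA          # insect -> plant
    for i in range(3):
        J[1 + i, 0] = M[i] * x[i]                 # plant -> mutualist (+)
        J[1 + i, 1 + i] = C[i] * (1 - 2 * x[i]) + M[i] * P
        J[4 + i, 0] = -N[i] * y[i]                # plant -> antagonist (-)
        J[4 + i, 4 + i] = D[i] - 2 * PJ[i] * y[i] - N[i] * P
    return J


def equilibrium(P_lo, P_hi, antagonists=True):
    """Root-find P*, rebuild z*, verify ||F(z*)|| < TAU, and test LAS with a margin."""
    P = brentq(plant_balance, P_lo, P_hi, args=(antagonists,))
    z = np.concatenate(([P], *insects_at(P, antagonists)))
    if np.linalg.norm(F(z)) >= TAU:
        raise RuntimeError("equilibrium residual above TAU")
    lead = float(np.max(np.linalg.eigvals(jacobian(z)).real))
    return z, lead, lead < -EIG_MARGIN


# All-positive coexistence needs y_j* > 0, i.e. P* < min_j d_j / n_j.
P_cap = float(np.min(D / N))
z_int, lead_int, las_int = equilibrium(1e-9, P_cap * (1 - 1e-9))
# Antagonist-free boundary state (y* = 0), bracketed above K.
z_hi, lead_hi, las_hi = equilibrium(K, 2 * K, antagonists=False)
print("coexistence:", np.round(z_int, 3), lead_int, las_int)
print("antagonist-free:", np.round(z_hi, 3), lead_hi, las_hi)
```

With the stated parameters, d_j/n_j = 10 for every antagonist, so an all-positive coexistence state needs P* < 10. The sketch should place it near P* ≈ 6.33 with a positive leading eigenvalue. Both the plant-mutualist loop (α_i · m_i x_i > 0) and the plant-antagonist loop (δ_j · (-n_j y_j) > 0) are positive feedbacks, and they add to the already positive J_PP = r(1 - 2P*/K) at low plant density.

The antagonist-free state near P* ≈ 571.4 should come out locally asymptotically stable. An interior saddle next to a stable boundary state is consistent with outcomes that depend on initial conditions.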

Full Rubrics (24 items)

- Include full ODE system in fixed state order (P, x1, x2, x3, y1, y2, y3) with all parameter values. joneslabbowdoin.com

- Include reproducible numerical integration spec for t in [0,100], fixed output grid/sampling rule, and explicit solve_ivp tolerances. researchgate.net

- Include precise equilibrium definition for numerical work (e.g., solve F(z)=0 with ||F|| < tau) with stated tau. nature.com

- Include local asymptotic stability criterion in Jacobian eigenvalue terms (all real parts negative), with numerical margin/tolerance. nature.com

- Include ecological interpretations of r (intrinsic growth rate) and K (carrying capacity) in plant dynamics. wiley.com

- Enumerate structurally implied equilibrium types, including plant-only equilibria (P*=0 and P*=K) and boundary equilibria with subsets of insects absent. nature.com

- Include derived mutualist equilibrium conditions from dx_i/dt = 0: x_i* = 0, or x_i* = 1 + (m_i/c_i)P* for x_i* > 0. nature.com

- Include derived antagonist equilibrium conditions from dy_j/dt = 0: y_j* = 0, or y_j* = (d_j - n_j P*)/p_j for y_j* > 0, with feasibility requiring d_j > n_j P*. wiley.com

- Explicitly state that a positive coexistence equilibrium can be found by substituting x_i*(P*) and y_j*(P*) into the plant equilibrium equation, subject to positivity constraints. nature.com

- Include full Jacobian/community matrix with all nonzero partial derivatives consistent with model. nature.com

- Identify an explicit positive-feedback instability mechanism from the mutualist loop (P -> x_i via m_i x_i > 0 and x_i -> P via alpha_i > 0). nature.com

- Identify an explicit antagonist feedback mechanism with mutual inhibition (P -> y_j via -n_j y_j < 0 and y_j -> P via delta_j < 0), including potential for bistability/instability. wiley.com

- Include exact mutualist-scaling experiment: (x1(0), x2(0), x3(0)) = s·(40, 35, 45), P(0) = 300, y(0) fixed at (50, 55, 60). wiley.com

- Include reproducible persistence indicator tied to min_{t in [80,100]} P(t) compared with an explicit extinction threshold P_ext. wiley.com

- Include explicit bracketing strategy to find [s_low, s_high] with opposite persistence outcomes, starting at s = 0 and expanding geometrically. researchgate.net

- Include bisection update rule based on persistence indicator, with stopping criterion on s tolerance and max iterations. researchgate.net

- Include Jacobian/eigenvalue diagnostic near threshold, tracking leading eigenvalue at relevant equilibrium and zero crossing behavior. nature.com

- Include clear threshold output: final bracket [s_low, s_high], point estimate s*, uncertainty width, and terminal outcomes used for classification. wiley.com

- Include explicit success criterion requiring both persistence and feasible + locally asymptotically stable coexistence equilibrium. nature.com

- Include quantitative intervention comparison basis, e.g., reduction in estimated critical s* or expansion of persistence region under stronger antagonism. researchgate.net

- Select exactly one parameter-level intervention (parameter, direction, magnitude) and show it yields persistence plus feasible LAS coexistence under baseline conditions. wiley.com

- Include initial-condition perturbation test design (how P(0), x(0), y(0) are perturbed, number of trials) and whether conclusions hold. wiley.com

- Include numerical-method check comparing at least two solver/tolerance setups (e.g., RK45 vs BDF) and report consistency in persistence/stability conclusions. researchgate.net

- Include at least one baseline/control comparison demonstrating intervention effect size (or antagonism-removal ablation such as delta3=0 or y3=0). wiley.com
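The bracketing, bisection, and ±10% perturbation items can be sketched as small harnesses that take the persistence or success test as a function argument. That keeps them independent of the ODE code. The sketch assumes persistence is monotone in s (no persistence below s*, persistence above it) and draws perturbation factors uniformly from [0.9, 1.1]. The function names and defaults (s_start, s_max, s_tol, max_iter, n_trials) are hypothetical.

```python
import random
from typing import Callable, Dict, Tuple


def bracket_and_bisect(persists: Callable[[float], bool], s_start: float = 1.0,
                       s_max: float = 1024.0, s_tol: float = 1e-3,
                       max_iter: int = 60) -> Tuple[float, float, float]:
    """Estimate s* = inf{s >= 0 : persists(s)}, assuming persistence is monotone in s.

    Returns (s_low, s_high, s_hat) with persists(s_low) False and persists(s_high) True.
    """
    if persists(0.0):
        return 0.0, 0.0, 0.0            # persists without any mutualist augmentation
    s_low, s_high = 0.0, s_start
    while not persists(s_high):         # geometric expansion of the upper end
        s_low, s_high = s_high, 2.0 * s_high
        if s_high > s_max:
            raise ValueError("no persisting s found up to s_max")
    for _ in range(max_iter):           # bisection on the persistence indicator
        if s_high - s_low <= s_tol:
            break
        s_mid = 0.5 * (s_low + s_high)
        if persists(s_mid):
            s_high = s_mid
        else:
            s_low = s_mid
    return s_low, s_high, 0.5 * (s_low + s_high)


def perturbed(base: Dict[str, float], rel: float, rng: random.Random) -> Dict[str, float]:
    """Scale each parameter by an independent factor drawn uniformly from [1-rel, 1+rel]."""
    return {k: v * rng.uniform(1.0 - rel, 1.0 + rel) for k, v in base.items()}


def robustness_rate(success: Callable[[Dict[str, float]], bool], base: Dict[str, float],
                    rel: float = 0.10, n_trials: int = 200, seed: int = 0) -> float:
    """Fraction of +/-rel global perturbations for which `success` still holds."""
    rng = random.Random(seed)
    return sum(success(perturbed(base, rel, rng)) for _ in range(n_trials)) / n_trials
```

In a full run, persists(s) would set (x1(0), x2(0), x3(0)) = s·(40, 35, 45), integrate, and compare min_{t∈[80,100]} P(t) with P_ext. Likewise, success(params) would combine that check with feasibility and local asymptotic stability of the coexistence equilibrium under the chosen intervention.

With a stand-in predicate such as lambda s: s >= 1.37, the returned bracket straddles 1.37 and is at most s_tol wide. Reusing the same seed across candidate interventions whose parameter dictionaries share the same keys gives each one identical perturbation factors, which keeps the comparison paired.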

Full Case Prompt

High-consequence, coupled simulations are increasingly used to support operational decisions in complex socio-technical infrastructure, but their predictive use is often limited by weak verification and validation (V&V), unclear validity domains, and poorly characterized uncertainty propagation across coupled scales. This study concerns a generic “coupled infrastructure” simulator intended for decision support under disruptions (including scenario-based stress testing), where full-system ground-truth experiments are infeasible and credibility must be built via a building-block (tiered) validation hierarchy.

The simulator couples: (i) a microscopic/mesoscopic agent-based urban mobility module, (ii) a steady-state electric distribution network module, and (iii) an interdependency layer linking traffic conditions to EV charging demand and grid constraints. Validation is organized into three fixed tiers: Tier U (unit problems), Tier B (benchmark cases), and Tier S (subsystem cases). Candidate system response quantities (SRQs), reported per tier where meaningful, are fixed as: SRQ1 mean corridor travel time over a 2‑hour peak period; SRQ2 95th percentile queue length at a signalized intersection; SRQ3 feeder maximum line loading (% of rating) over the same window; SRQ4 number of EV charging sessions and peak charging power.

Mobility observations include 28 weekdays of loop-detector counts/speeds aggregated at 1‑min resolution for 12 links, plus video-derived turning counts at 0.2 Hz for 3 intersections (annotated count uncertainty ±5%). Grid observations include SCADA node voltages and feeder currents at 1‑min resolution for 15 nodes (instrument accuracy ±0.5% voltage, ±1% current).
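One way to turn the stated accuracies into an uncertainty budget is to read each ±a as the half-width of a rectangular error distribution. That gives a standard uncertainty of a/√3 (the GUM Type B convention), which can then be combined with the simulator's Monte Carlo spread by root-sum-square. The coverage factor k = 2 and the agrees rule below are illustrative assumptions, not requirements of the case.

```python
import math

# Stated instrument/annotation accuracies, read as relative half-widths (assumption).
ACCURACY = {"turning_count": 0.05, "voltage": 0.005, "current": 0.01}


def standard_uncertainty(value: float, channel: str) -> float:
    """Type B standard uncertainty for a rectangular error of half-width a: u = a / sqrt(3)."""
    return abs(value) * ACCURACY[channel] / math.sqrt(3.0)


def combined_uncertainty(*components: float) -> float:
    """Root-sum-square of independent standard uncertainties."""
    return math.sqrt(sum(c * c for c in components))


def agrees(sim: float, obs: float, u_model: float, channel: str, k: float = 2.0) -> bool:
    """Hypothetical rule: accept when |sim - obs| <= k * u_c (measurement + model spread)."""
    u_c = combined_uncertainty(standard_uncertainty(obs, channel), u_model)
    return abs(sim - obs) <= k * u_c


# Example: a 300-vehicle turning count carries u = 300 * 0.05 / sqrt(3), about 8.66 vehicles.
print(round(standard_uncertainty(300.0, "turning_count"), 2))
```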

At most two designed field interventions plus passive observation may be conducted; downtime must be <2 hours per site. The two available intervention designs are: E1 (mobility) modify signal timing plans at 3 intersections for 10 weekdays with before/after measurement; E2 (grid/EV) apply a time-of-use price signal to a pilot fleet of 60 EVs for 14 days and log charging start times and power. At least one explicit control condition (e.g., matched baseline windows or a no-change scenario) is required.
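For E1's before/after measurement with a matched control condition, one standard contrast is a difference-in-differences estimate. It subtracts the change seen in control windows from the change at the retimed intersections. This is a suggestion, not a method named in the case. It assumes the control windows track the treated sites' underlying trend, and for the standard error it treats days as independent.

```python
import math
from statistics import mean, stdev
from typing import Sequence


def did_effect(treat_before: Sequence[float], treat_after: Sequence[float],
               ctrl_before: Sequence[float], ctrl_after: Sequence[float]) -> float:
    """Change at intervention sites minus change over the matched control windows."""
    return (mean(treat_after) - mean(treat_before)) - (mean(ctrl_after) - mean(ctrl_before))


def did_standard_error(*groups: Sequence[float]) -> float:
    """Approximate SE, assuming days are independent within and across the four groups."""
    return math.sqrt(sum(stdev(g) ** 2 / len(g) for g in groups))
```

The inputs would be per-day SRQ values, for example daily SRQ1 corridor travel times, in each of the four windows.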

The uncertain inputs, each to be classified as aleatory or epistemic with justification, are: demand multiplier α∈[0.85,1.15], driver value-of-time β∈[0.6,1.4], EV arrival rate λ_EV∈[0.7,1.3]×baseline, and feeder equivalent impedance scaling z∈[0.9,1.1]. Uncertainty propagation must use Monte Carlo sampling with N_MC=10,000 per tier plus a Latin hypercube robustness check. Global sensitivity analysis must produce rank-ordered input importance for each SRQ (e.g., Sobol’ total-effect indices or a clearly justified alternative). Validation experiments must be jointly designed and documented by experimentalists, model developers, code developers, and end users, including measurement uncertainty, boundary/initial conditions, and an explicit intended validity domain.
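The propagation and sensitivity steps can be sketched with NumPy alone. The sketch makes three assumptions:

- Uniform distributions over the stated ranges (the case gives ranges, not distributions).
- A basic Latin hypercube built by stratify-and-permute.
- Total-effect indices estimated with the Jansen pick-freeze estimator, which costs n(d + 2) model runs.

toy_srq is a hypothetical placeholder for an actual simulator SRQ. Only the input ranges and N_MC = 10,000 come from the case.

```python
import numpy as np

# Input ranges from the case prompt; uniform distributions are an assumption.
BOUNDS = {"alpha": (0.85, 1.15), "beta": (0.6, 1.4),
          "lambda_ev": (0.7, 1.3), "z": (0.9, 1.1)}
LO = np.array([b[0] for b in BOUNDS.values()])
HI = np.array([b[1] for b in BOUNDS.values()])


def scale(u):
    """Map unit-hypercube points to the physical input ranges."""
    return LO + u * (HI - LO)


def latin_hypercube(n, rng):
    """One draw per equal-width stratum in each dimension, columns independently permuted."""
    u = (rng.random((n, LO.size)) + np.arange(n)[:, None]) / n
    for j in range(LO.size):
        u[:, j] = rng.permutation(u[:, j])
    return u


def toy_srq(x):
    """Hypothetical stand-in for one simulator SRQ; replace with real model runs."""
    alpha, beta, lam, z = x.T
    return 20.0 * alpha**2 / beta**0.2 + 0.5 * lam * z


def sobol_total_effect(f, n, rng):
    """Jansen estimator of total-effect indices; costs n * (d + 2) evaluations of f."""
    d = LO.size
    A, B = rng.random((n, d)), rng.random((n, d))
    fA, fB = f(scale(A)), f(scale(B))
    var_y = np.var(np.concatenate([fA, fB]))
    st = np.empty(d)
    for i in range(d):
        ABi = A.copy()
        ABi[:, i] = B[:, i]                  # column i taken from B, the rest from A
        st[i] = 0.5 * np.mean((fA - f(scale(ABi))) ** 2) / var_y
    return st


rng = np.random.default_rng(42)
N_MC = 10_000
y_mc = toy_srq(scale(rng.random((N_MC, LO.size))))   # plain Monte Carlo
y_lhs = toy_srq(scale(latin_hypercube(N_MC, rng)))     # LHS robustness check
q = (0.05, 0.5, 0.95)
print("MC band: ", np.quantile(y_mc, q))
print("LHS band:", np.quantile(y_lhs, q))
st = sobol_total_effect(toy_srq, N_MC, rng)
print("total effects:", dict(zip(BOUNDS, np.round(st, 3))))
print("rank order:", [list(BOUNDS)[i] for i in np.argsort(-st)])
```

Agreement between the MC and LHS quantile bands is the robustness check; a material difference would point to sampling artifacts rather than model behavior. With four inputs and n = 10,000, the Sobol' design alone needs 60,000 simulator runs per tier. All SRQs can be read from the same runs.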

- Hierarchy design and inference: Propose a concrete Tier U/Tier B/Tier S decomposition for (a) mobility, (b) grid, and (c) the coupling layer. For each tier, specify which SRQs are in-scope, which empirical datasets support validation, and what upward inference is warranted (i.e., what can be claimed one tier up if agreement is achieved at this tier, and what cannot).

- Validation experiment design under constraints: Specify how E1 and/or E2 will be jointly designed (including at least one control condition) to capture the essential behaviors/physics of interest while minimizing confounding. Include boundary/initial condition specification, an uncertainty budget tied to the stated measurement accuracies, replication/segmentation rules (e.g., day-type screening), and at least one pre-registered inclusion/exclusion rule that prevents post hoc selection.

- Validation metrics and decision logic: Define a small set of quantitative validation metrics suitable for SRQ1–SRQ4 across tiers (time-series and/or distributional as appropriate), along with tier-specific acceptance/credibility thresholds that are consistent with intended operational use. Evaluate at least one competing metric choice or decision threshold as a planned robustness/control comparison.

- UQ and global sensitivity integration: Provide a tier-by-tier workflow for propagating uncertainty (N_MC=10,000 plus Latin hypercube check) to produce SRQ uncertainty bands and for computing rank-ordered global sensitivities (e.g., Sobol’ total-effect). Explain how sensitivity results will be used to (i) prioritize additional measurements or redesign experiments within the downtime/budget constraints and (ii) constrain calibration to reduce overfitting. State what robustness-check outcomes would materially change the credibility conclusion.

- V&V separation and governance: Provide a governance plan that separates software verification, numerical verification, calibration, and validation across tiers, including roles for experimentalists, model developers, code developers, and end users. Specify required artifacts (versioning, run provenance, validation data package, uncertainty budget, scenario definitions) and a pre-specified adjudication rule for conflicting evidence across tiers without goal-shifting.
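Parts of the governance plan can be made mechanical. This sketch does three things:

- Fingerprints each configuration as SHA-256 over canonical JSON, for run provenance.
- Derives the calibration/validation day split from a salt string recorded before any model run, so the split cannot be tuned afterward.
- Encodes one hypothetical pre-specified adjudication rule.

The rule, the salt, and the hold-out count are illustrative, not prescribed by the case.

```python
import hashlib
import json
import random
from dataclasses import dataclass
from typing import Dict, List, Tuple

TIERS = ("U", "B", "S")


def fingerprint(config: dict) -> str:
    """SHA-256 of canonical JSON: ties every run to an exact configuration."""
    blob = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(blob.encode("utf-8")).hexdigest()


def preregistered_split(days: List[str], salt: str, n_holdout: int) -> Tuple[List[str], List[str]]:
    """(calibration, validation) split derived only from a salt recorded in advance."""
    seed = int.from_bytes(hashlib.sha256(salt.encode("utf-8")).digest()[:8], "big")
    held = set(random.Random(seed).sample(range(len(days)), n_holdout))
    calib = [d for k, d in enumerate(days) if k not in held]
    valid = [d for k, d in enumerate(days) if k in held]
    return calib, valid


@dataclass(frozen=True)
class TierResult:
    tier: str
    passed: bool
    config_hash: str


def credibility_level(results: Dict[str, TierResult], registered: Dict[str, str]) -> str:
    """Hypothetical adjudication rule, fixed before validation data are unlocked.

    The claim extends only to the highest tier such that it and every lower tier
    passed with the configuration registered for that tier; a lower-tier failure or
    an unregistered configuration caps the claim regardless of higher-tier results.
    """
    level = "none"
    for tier in TIERS:
        r = results.get(tier)
        if r is None or not r.passed or r.config_hash != registered.get(tier):
            break
        level = tier
    return level
```

For example, with the 28 observed weekdays labelled in order and eight held out, the same salt always reproduces the same 20/8 split. Under this rule, a Tier B failure caps the credibility claim at Tier U even if Tier S agrees.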

Full Rubrics (25 items)

- Include Tier U/Tier B/Tier S decomposition for grid module, naming unit feeder tests, canonical feeder benchmark, and feeder subsystem aligned with SCADA nodes.

- Define tier-aware intended validity domain (weekday peak windows, alpha/beta/lambdaEV/z ranges) and explain how tier evidence supports extrapolation limits for disruption scenarios. osti.gov ac.uk

- Include at least one cross-tier consistency check (e.g., Tier-U calibrated parameters held fixed when assessing Tier B/S). osti.gov

- Specify whether E1, E2, or both are executed; justify the joint design for isolating essential behaviors and minimizing confounding; include joint design process across four stakeholder groups. osti.gov ac.uk

- Specify boundary and initial condition documentation for each intervention (demand boundary, signal timing plans, feeder topology, baseline TOU, initial queue/feeder state). osti.gov

- Include uncertainty budget tied to provided measurement accuracies (+/-5% turning counts, +/-0.5% voltage, +/-1% current) and how these enter validation comparisons. osti.gov

- Explicitly incorporate hard constraints (max two interventions, <2h downtime/site) and describe implementation feasibility.

- Describe how interventions support validation of SRQs (E1 for SRQ1/SRQ2, E2 for SRQ4 and downstream SRQ3), and state SRQs not validated by each intervention and why. osti.gov

- Specify stakeholder roles and deliverables for measurement plan, scenario definition, and data package documentation. osti.gov ac.uk

- Define validation metrics with explicit computation rules (e.g., RMSE, MAPE, KS distance, coverage probability). osti.gov

- Specify tier-specific acceptance thresholds (U/B/S) and justify why thresholds vary with intended operational decision-support use. osti.gov ac.uk

- Explicitly incorporate measurement uncertainty into decision logic (error bars, interval overlap, likelihood weighting). osti.gov

- Differentiate metrics suitable for Tier U (physics/consistency) versus higher tiers (emergent network congestion and feeder loading extremes). osti.gov

- Include anti-overcalibration check (held-out days, separate calibration/validation windows, or cross-validation), and justify necessity for stochastic ABM settings. arxiv.org

- Present tier-by-tier uncertainty workflow using Monte Carlo NMC=10,000 per tier plus LHS robustness check. osti.gov

- Explicitly state uncertain inputs (alpha, beta, lambdaEV, z) and classify each as aleatory or epistemic with justification. osti.gov

- Explain how uncertainty is propagated to SRQ uncertainty bands (e.g., empirical quantiles over MC runs) and what is reported per tier. osti.gov

- Include concrete sensitivity-computation plan and compare LHS results against MC to detect sampling artifacts. osti.gov

- Explain how sensitivity results prioritize additional measurements or experiment redesign under downtime/budget constraints, with at least one explicit example. osti.gov

- Explain how sensitivity results constrain calibration to reduce overfitting (focus influential parameters, freeze weak ones, cross-validation). arxiv.org

- Specify governance roles for experimentalists, model developers, code developers, and end users across experiment design, model/code changes, and acceptance decisions. osti.gov ac.uk

- Include pre-specified workflow preventing calibration/validation leakage (locked validation data, separated windows, change-control approvals). arxiv.org

- Include numerical verification checks for each simulator component (power-flow convergence, ABM time-step sensitivity, coupling time alignment), each with pass/fail check. osti.gov

- Include plan for configuration management across tiers (tier-specific configurations/parameter sets) and promotion criteria defining what is fixed vs changeable. osti.gov

- Describe how validation results are communicated as defensible predictive credibility claims with explicit validity domain/limitations, and include at least one independent review/red-team mechanism. osti.gov
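The metric item asks for explicit computation rules; one concrete set is sketched below:

- RMSE and MAPE apply to paired series (for example SRQ1 per time bin).
- The two-sample Kolmogorov-Smirnov distance applies to distributional SRQs, such as queue-length samples for SRQ2.
- Coverage is the fraction of observations inside a simulated uncertainty band.

Tier-specific thresholds on these values still have to be set and justified separately.

```python
import numpy as np


def rmse(sim, obs):
    """Root-mean-square error over paired samples (e.g. SRQ1 per time bin)."""
    sim, obs = np.asarray(sim, float), np.asarray(obs, float)
    return float(np.sqrt(np.mean((sim - obs) ** 2)))


def mape(sim, obs):
    """Mean absolute percentage error in percent; undefined if any obs is zero."""
    sim, obs = np.asarray(sim, float), np.asarray(obs, float)
    if np.any(obs == 0):
        raise ValueError("MAPE undefined for zero observations")
    return float(100.0 * np.mean(np.abs((sim - obs) / obs)))


def ks_distance(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: max gap between empirical CDFs."""
    a, b = np.sort(np.asarray(a, float)), np.sort(np.asarray(b, float))
    grid = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, grid, side="right") / a.size
    cdf_b = np.searchsorted(b, grid, side="right") / b.size
    return float(np.max(np.abs(cdf_a - cdf_b)))


def coverage(lower, upper, obs):
    """Share of observations falling inside the simulated band [lower, upper]."""
    lower, upper, obs = (np.asarray(v, float) for v in (lower, upper, obs))
    return float(np.mean((obs >= lower) & (obs <= upper)))
```

If SciPy is available, scipy.stats.ks_2samp reports the same two-sample statistic and can serve as a cross-check.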

What's Next

Cite This Work

@misc{unipat2026uniscientist,
  title = {UniScientist: Advancing Universal Scientific Research Intelligence},
  author = {Baixuan Li and Jialong Wu and Yida Zhao and Wendong Xu and Xuanzhong Chen and Huifeng Yin and Liang Chen and Wentao Zhang and Kuan Li},
  year = {2026},
  url = {https://unipat.ai/blog/UniScientist}
}

References

- FrontierScience: Evaluating AI's Ability to Perform Expert-Level Scientific Tasks. arXiv preprint, 2026. arXiv:2601.21165

- DeepResearch Bench: A Comprehensive Benchmark for Deep Research Agents. arXiv preprint, 2025. arXiv:2506.11763

- DeepResearch Bench II: Diagnosing Deep Research Agents via Rubrics from Expert Report. arXiv preprint, 2026. arXiv:2601.08536

- ResearchRubrics: A Benchmark of Prompts and Rubrics for Evaluating Deep Research Agents. arXiv preprint, 2025. arXiv:2511.07685